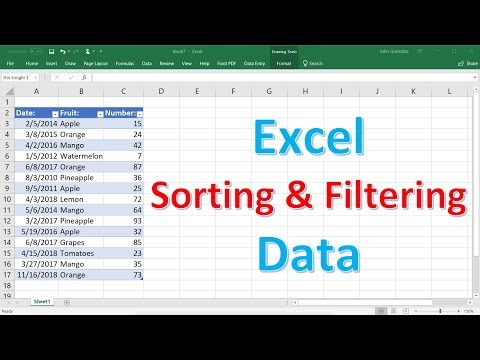

In this tidyverse tutorial, we will learn how to use dplyr's filter() function to select, or filter, rows from a data frame, with multiple examples. First, we will select rows of a data frame based on the value of a single column (variable). Then we will learn how to select rows using the values of multiple variables (columns).

For this kind of filtering, we can reuse the simple Row Filter node seen before for filtering rows on matching patterns in nominal columns. Since we are dealing with numerical values, here we use the "use range checking" option, which requires the lower and upper bounds of the numeric interval. Remember that both the lower and the upper bound are included in the matching range. Besides filtering data rows in databases, KNIME can also perform filtering operations in fully functional local big data environments such as Apache Spark. Rows that match the conditions are included in the output DataFrame.
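As a rough illustration of inclusive range checking (not KNIME's implementation), here is a plain-Python sketch. The `age` column and the rows are hypothetical, and dropping rows whose value is missing is an assumption of this sketch.

```python
from typing import Iterable, Optional


def range_filter(rows: Iterable[dict], column: str, lower: float, upper: float) -> list:
    """Keep rows whose value in `column` lies in the closed interval [lower, upper].

    Both bounds are inclusive. Rows with a missing value (None) are dropped,
    which is an assumption of this sketch.
    """
    kept = []
    for row in rows:
        value: Optional[float] = row.get(column)
        if value is not None and lower <= value <= upper:
            kept.append(row)
    return kept


# Hypothetical sample rows.
people = [
    {"name": "a", "age": 17},
    {"name": "b", "age": 18},
    {"name": "c", "age": 40},
    {"name": "d", "age": 65},
    {"name": "e", "age": 66},
    {"name": "f", "age": None},
]

print([r["name"] for r in range_filter(people, "age", 18, 65)])  # ['b', 'c', 'd']
```

The rows at exactly 18 and 65 are kept, which is what "both bounds included" means in practice.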

We often want to operate only on a specific subset of rows of a data frame. The dplyr filter() function provides a flexible way to extract the rows of interest based on multiple conditions. The last two row filter nodes can come in handy if we intend to abstract our workflows. The Nominal Row Filter Configuration node provides a value filter configuration option to an encapsulating component's dialog. This node takes a data table and returns a filtered data table with only the selected values of a chosen column, as well as a flow variable containing those values.

This dialog is used to transform a data table by excluding rows based on a boolean expression: only the rows matching the expression are included in the data table. By adding a filter rows transformation, you can make sure that values you never want to include in your data table get filtered out even before the data is loaded. With this node, you can implement all kinds of filtering rules, not only OR conditions. Custom filters let you combine fields, constants, functions, and operators into the filter you need. Looker lets you build an expression that evaluates to true or false.

When you run the query, Looker returns only the rows for which that condition is true. The SELECT command returns all the rows in a table unless we specify a limit, as shown in the previous tutorial examples. In many situations, we need to filter the returned rows so that only those meeting certain conditions remain.

We can filter the rows using the WHERE clause by specifying the filter condition, and we can specify multiple conditions using the keywords AND and OR. Similarly, data frame rows can be subjected to multiple conditions by combining them with logical operators such as AND (&) and OR (|); the rows returning TRUE are retained in the final output. The filter() function is used to subset the rows of .data, applying the expressions passed in the ... argument to the column values to determine which rows should be retained.

It can be applied to both grouped and ungrouped data (see group_by() and ungroup()). However, dplyr is not yet smart enough to optimise the filtering operation on grouped datasets that do not need grouped calculations, so filtering is often considerably faster on ungrouped data. The filtering condition must return a Boolean value, and rows are kept where it evaluates to TRUE.
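The rule "keep a row only if every condition is TRUE" can be mimicked in plain Python. This is an analogy, not dplyr itself: `None` stands in for R's NA, and the `eq`/`gt` helpers and the penguin-like rows are hypothetical. It roughly mirrors `filter(penguins, species == "Gentoo", bill_length_mm > 45)`, where comma-separated conditions are combined as with `&`.

```python
from typing import Any, Callable, Optional

# A condition maps a row to True, False, or None (None plays the role of R's NA).
Condition = Callable[[dict], Optional[bool]]


def filter_rows(rows: list, *conditions: Condition) -> list:
    """Keep a row only when every condition returns exactly True.

    False or None (missing) from any condition drops the row.
    """
    return [row for row in rows if all(cond(row) is True for cond in conditions)]


def eq(column: str, target: Any) -> Condition:
    """`column == target`, returning None when the value is missing."""
    def cond(row: dict) -> Optional[bool]:
        value = row.get(column)
        return None if value is None else value == target
    return cond


def gt(column: str, threshold: float) -> Condition:
    """`column > threshold`, returning None when the value is missing."""
    def cond(row: dict) -> Optional[bool]:
        value = row.get(column)
        return None if value is None else value > threshold
    return cond


# Hypothetical penguin-like rows; None marks a missing measurement.
penguins = [
    {"species": "Adelie", "bill_length_mm": 39.1},
    {"species": "Gentoo", "bill_length_mm": 47.5},
    {"species": "Gentoo", "bill_length_mm": None},
    {"species": "Chinstrap", "bill_length_mm": 49.0},
]

result = filter_rows(penguins, eq("species", "Gentoo"), gt("bill_length_mm", 45))
print(result)  # [{'species': 'Gentoo', 'bill_length_mm': 47.5}]
```

The Gentoo row with a missing bill length is dropped because its comparison yields None rather than True.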

The Java Snippet Row Filter node uses the same Java editor as the Java Snippet node. The following code might be one way to implement the rules from the Rule-based Row Filter node. The above-mentioned queries perform various filter operations on string values using single or multiple conditions. Make sure to put values in single quotes when filtering on columns of a string data type. Any data frame column in R can be referenced either by its name, df$col_name, or by its index position in the data frame, df[[col_index]].

The cell values of this column can then be subjected to logical or comparison conditions, and the resulting data frame subset can be obtained. These conditions are applied to the row index of the data frame so that the rows satisfying them are returned. Multiple conditions can also be combined using the which() function in R, which returns the positions of the elements that satisfy the given condition. In Spark, the filter() or where() function is used to filter the rows of a DataFrame or Dataset based on one or more conditions or an SQL expression. If you are coming from an SQL background, you can use where() instead of filter(); the two behave the same.
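R's which() returns the 1-based positions of TRUE elements and skips NA. The small Python analogue below shows how those positions can then be used to subset rows, as in `df[which(df$age > 30 & df$age < 50), ]`. The `ages` data is hypothetical and `None` stands in for NA.

```python
def which(flags: list) -> list:
    """Return the 1-based positions of elements that are exactly True (None/NA is skipped)."""
    return [i for i, flag in enumerate(flags, start=1) if flag is True]


def rows_at(rows: list, positions: list) -> list:
    """Select rows by 1-based position, the way R indexes data frame rows."""
    return [rows[i - 1] for i in positions]


# Hypothetical data; None represents a missing age.
ages = [25, None, 40, 31, 55]
rows = [{"id": i, "age": a} for i, a in enumerate(ages, start=1)]

# Combine two conditions element-wise; a missing age yields None (like NA).
flags = [None if a is None else (a > 30 and a < 50) for a in ages]

positions = which(flags)
print(positions)                                    # [3, 4]
print([r["id"] for r in rows_at(rows, positions)])  # [3, 4]
```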

Another kind of row filtering matches RowID values. In this case, a Row Filter node with the "Include rows by row ID" option enabled on the left of the configuration window might solve the problem. Here the matching pattern can be a regular expression, a single RowID, or the start of the RowID value, and each of these options can be made case sensitive by enabling the corresponding flag. The Geo-Coordinate Row Filter is an interactive row filter.

This means that, in the node configuration window, the user manually selects an area on the world map. Data rows whose latitude and longitude coordinates fall inside this area are then included in, or excluded from, the resulting data set. In this post, we covered many ways of selecting data using Pandas.

We used examples to filter a dataframe by column value, based on dates, using a specific string, using regex, or based on items in a list of values. We also covered how to select null and non-null values, and how to use the query function and the loc indexer. We can filter the result set returned by a SELECT query using the WHERE clause.

We can specify either a single condition or multiple conditions to filter the results. The keywords AND and OR can be used to combine multiple conditions, and the keywords IN and NOT IN restrict a column's values to, or exclude them from, a set of values. In the above example, we selected rows of a dataframe by checking a variable's value for equality. We can also use filter() to select rows by checking for inequality, or for values greater or less than a given value.
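To see WHERE with AND, OR, IN, and NOT IN in action, here is a sketch using Python's built-in sqlite3 module and an in-memory database. The `users` table (id, first name, last name, echoing the user table mentioned in this post) and its rows are hypothetical. String values are written in single quotes, as SQL requires.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with a small, hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, first_name TEXT, last_name TEXT)")
    conn.executemany(
        "INSERT INTO users (id, first_name, last_name) VALUES (?, ?, ?)",
        [(1, "John", "Smith"), (2, "Jane", "Doe"), (3, "Emma", "Smith"), (4, "Noah", "Brown")],
    )
    return conn


def ids_where(conn: sqlite3.Connection, where_clause: str) -> list:
    """Return matching ids in order.

    The clause is pasted into the SQL text for demonstration only; use ?
    placeholders for any value that comes from user input.
    """
    sql = f"SELECT id FROM users WHERE {where_clause} ORDER BY id"
    return [row[0] for row in conn.execute(sql)]


conn = make_db()
print(ids_where(conn, "last_name = 'Smith'"))                         # [1, 3]
print(ids_where(conn, "last_name = 'Smith' AND first_name = 'Emma'"))  # [3]
print(ids_where(conn, "first_name = 'Jane' OR last_name = 'Brown'"))   # [2, 4]
print(ids_where(conn, "last_name IN ('Doe', 'Brown')"))                # [2, 4]
print(ids_where(conn, "last_name NOT IN ('Doe', 'Brown')"))            # [1, 3]
```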

Let us learn how to filter a data frame based on the value of a single column. In this example, we want to subset the data so that we select rows whose "sex" column value is "female". In this tutorial, we'll look at how to filter pandas dataframe rows based on a list of values for a column.
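In pandas, filtering on a list of values is usually written `df[df["species"].isin(values)]` and negated with `~`. The plain-Python sketch below shows the same membership test without pandas; the rows and the `filter_in` helper are hypothetical.

```python
def filter_in(rows: list, column: str, values, keep: bool = True) -> list:
    """Keep rows whose `column` value is in `values` (or, with keep=False, is not in it)."""
    allowed = set(values)
    return [row for row in rows if (row.get(column) in allowed) == keep]


# Hypothetical penguin-like rows.
penguins = [
    {"species": "Adelie", "sex": "female"},
    {"species": "Gentoo", "sex": "male"},
    {"species": "Chinstrap", "sex": "female"},
    {"species": "Gentoo", "sex": "female"},
]

# Single value: rows whose sex is "female".
females = filter_in(penguins, "sex", ["female"])
# List of values: rows whose species is Adelie or Gentoo.
adelie_or_gentoo = filter_in(penguins, "species", ["Adelie", "Gentoo"])
# Negation: rows whose species is not Adelie.
not_adelie = filter_in(penguins, "species", ["Adelie"], keep=False)

print(len(females), len(adelie_or_gentoo), len(not_adelie))  # 3 3 3
```

Converting `values` to a set keeps each membership check fast even when the list of allowed values is long.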

This section provides examples of reading filtered data from a table using the SELECT command with the WHERE clause. Use the query below to create the user table, with id, first name, and last name columns, to store user data. Similarly, we can filter rows of a dataframe with a less-than condition. In the example below, we select rows whose flipper length column value is less than 175. Let us see an example of filtering rows when a column's value is not equal to "something".

In the example below, we keep the dataframe rows whose species column value is not "Adelie". In this tutorial, you have learned how to use the SQLite WHERE clause to filter rows in the final result set using comparison and logical operators. This section presents three functions, filter_all(), filter_if() and filter_at(), to filter rows within a selection of variables. Many functions assume that data is structured as tables in which rows are individual observations and columns are variables describing those observations. This data format is expected by packages such as dplyr, ggplot2 and others.
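The idea behind filter_all(), filter_if() and filter_at() is to apply one condition to several columns and keep a row when it holds for all of them (all_vars()) or for any of them (any_vars()). The plain-Python sketch below approximates `filter_at(df, vars(q1, q2), any_vars(. > 3))` and `filter_all(df, all_vars(. > 3))`; the score columns, rows, and `filter_across` helper are hypothetical.

```python
from typing import Callable


def filter_across(rows: list, columns: list, predicate: Callable[[float], bool],
                  require_all: bool = True) -> list:
    """Keep rows where `predicate` holds for all (require_all=True) or any of `columns`."""
    combine = all if require_all else any
    return [row for row in rows if combine(predicate(row[c]) for c in columns)]


# Hypothetical survey scores.
scores = [
    {"q1": 5, "q2": 4, "q3": 1},
    {"q1": 2, "q2": 5, "q3": 4},
    {"q1": 1, "q2": 2, "q3": 3},
    {"q1": 4, "q2": 5, "q3": 6},
]

# Like filter_at(df, vars(q1, q2), any_vars(. > 3)): q1 or q2 above 3.
any_high = filter_across(scores, ["q1", "q2"], lambda v: v > 3, require_all=False)
# Like filter_all(df, all_vars(. > 3)): every column above 3.
all_high = filter_across(scores, ["q1", "q2", "q3"], lambda v: v > 3)

print(len(any_high), len(all_high))  # 3 1
```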

The filter() function from the dplyr package performs filtering. Its first argument is the dataset, and further arguments define logical conditions. Use a FILTER clause to select rows based on a numeric value by using basic operators or one or more Oracle GoldenGate column-conversion functions.

The filter() function is used to subset a data frame, retaining all rows that satisfy your conditions. To be retained, a row must produce a value of TRUE for all conditions. Note that when a condition evaluates to NA, the row is dropped, unlike base subsetting with [. When you want to filter the rows of a DataFrame based on a value present in an array collection column, you can use the first syntax. The example below uses array_contains() from the PySpark SQL functions, which checks whether a value is contained in an array: it returns true if the value is present and false otherwise.
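In PySpark this filter is typically written `df.filter(array_contains(df.languages, "Java"))`, with `array_contains` imported from `pyspark.sql.functions`. As a dependency-free sketch of the same logic, the plain-Python version below keeps rows whose list-valued column contains a value. The `languages` column and the rows are hypothetical. A missing list drops the row, consistent with Spark, where array_contains returns null for a null array and filter() keeps only rows whose condition is true.

```python
def filter_array_contains(rows: list, column: str, value) -> list:
    """Keep rows whose list-valued `column` contains `value`; a missing list drops the row."""
    return [row for row in rows if row.get(column) is not None and value in row[column]]


# Hypothetical rows with an array-like column.
people = [
    {"name": "James", "languages": ["Java", "Scala"]},
    {"name": "Anna", "languages": ["Python", "R"]},
    {"name": "Maria", "languages": None},
    {"name": "Robert", "languages": ["CSharp", "Java"]},
]

print([p["name"] for p in filter_array_contains(people, "languages", "Java")])  # ['James', 'Robert']
```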

Sometimes the goal of a filtering operation is to identify records with missing values. Continuing our census analysis, the records in our data set might be identified by supposedly unique ID labels. A missing ID for a record might prevent the execution of subsequent operations, such as a join. Similarly, numerical row filtering rules can be implemented using a Java Snippet Row Filter node, where the filtering condition must return a Boolean value and rows are kept if the condition evaluates to TRUE. The next argument is a mildly complex logical statement.

Here, we're telling the filter() function that we only want to return rows of data where the year variable is equal to 2001 and the city variable is equal to 'Abilene'. For each rule, you'll select a column, a filtering condition, and a matching value. Now that we've selected a couple of columns to print out, let's use AWK to search for a specific thing: a number we know exists in the dataset. Note that if you don't specify which fields to print out, AWK prints the whole line that matches the search by default. After attending a bash class I taught for Software Carpentry, a student contacted me because she was having trouble working with a large data file in R.

She wanted to filter out rows based on a condition in two columns. That is an easy task in R, but because the file was large and R objects are held in memory, reading the whole file in was too much for my student's computer to handle. She sent me the sample file below and described how she wanted to filter it.
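The memory problem comes from loading the whole file at once. A streaming filter reads and tests one line at a time, which is what AWK does with a one-liner such as `awk -F, '$2 == "A" && $3 > 10' big.csv`. A comparable plain-Python sketch follows; the file layout (a site code in the second column, a number in the third), the threshold, the file name, and the `stream_filter` helper are all hypothetical.

```python
import csv
import io
from typing import Iterable, Iterator


def stream_filter(lines: Iterable[str], key_col: int, key_value: str,
                  num_col: int, threshold: float) -> Iterator[list]:
    """Yield rows where fields[key_col] == key_value and fields[num_col] > threshold.

    Rows are parsed and tested one at a time, so only the current line is held
    in memory. Column indices are 0-based, unlike AWK's $1, $2, ...
    """
    for fields in csv.reader(lines):
        if len(fields) <= max(key_col, num_col):
            continue  # skip short or malformed lines
        try:
            number = float(fields[num_col])
        except ValueError:
            continue  # skip a header line or a non-numeric value
        if fields[key_col] == key_value and number > threshold:
            yield fields


# In practice the input would be an open file, e.g.
#   with open("big.csv", newline="") as f: ...
# Here a small in-memory sample stands in for it.
sample = io.StringIO("id,site,value\n1,A,5\n2,B,12\n3,A,20\n4,A,abc\n")
for row in stream_filter(sample, key_col=1, key_value="A", num_col=2, threshold=10):
    print(row)  # ['3', 'A', '20']
```

Because `stream_filter` is a generator, matching rows can be written out as they are found instead of being collected in a list.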

I chose AWK because it is designed for this type of task: it parses data line by line and doesn't need to read the whole file into memory to process it. Further, if we wanted to speed up our AWK even more, we could investigate AWK implementations, such as MAWK, that are built for speed. The options above only work when you match on the full value of a variable. In some cases, though, we need to filter based on partial matches. In this case, we need a function that evaluates regular expressions on strings and returns boolean values.

Whenever the statement is TRUE, the row is kept. Looker expressions can use as many fields, functions, and operators as your business logic requires. The more complex your condition, the more work your database must do to evaluate it, which may lengthen query times. In this example, we select rows whose bill length column value is missing. Often one might want to filter for, or filter out, rows in which one of the columns has missing values.
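In dplyr, filtering for missing values is `filter(penguins, is.na(bill_length_mm))`, and filtering them out is `filter(penguins, !is.na(bill_length_mm))`. The plain-Python sketch below does the same on a list of rows. Treating both `None` and float NaN as missing is an assumption of this sketch, and the helper names and rows are hypothetical.

```python
import math


def is_missing(value) -> bool:
    """Treat None and float NaN as missing values."""
    return value is None or (isinstance(value, float) and math.isnan(value))


def rows_with_missing(rows: list, column: str) -> list:
    """Filter for rows whose `column` is missing (like is.na(column))."""
    return [row for row in rows if is_missing(row.get(column))]


def complete_rows(rows: list, columns: list) -> list:
    """Filter out rows where any of `columns` is missing (like !is.na() on each)."""
    return [row for row in rows if not any(is_missing(row.get(c)) for c in columns)]


# Hypothetical penguin-like rows.
penguins = [
    {"id": 1, "bill_length_mm": 39.1, "sex": "male"},
    {"id": 2, "bill_length_mm": None, "sex": None},
    {"id": 3, "bill_length_mm": float("nan"), "sex": "female"},
    {"id": 4, "bill_length_mm": 46.2, "sex": None},
]

print([r["id"] for r in rows_with_missing(penguins, "bill_length_mm")])       # [2, 3]
print([r["id"] for r in complete_rows(penguins, ["bill_length_mm", "sex"])])  # [1]
```

The same idea applies to the census example: filtering for a missing ID column flags the records that would break a later join.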

With is.na() on the column of interest, we can select rows in which a specific column's value is missing. When the column of interest is numeric, we can also select rows using a greater-than condition. Let us see an example of filtering rows when a column's value is greater than some specific value. Select a column by clicking on it in the list and then clicking the Insert Columns button, or double-click the column to send it to the Expression field. You can narrow down the list of available columns by typing part of a name in the "Type to search" field.

You can also enter an expression in the field, using the rules described on the Searching in TIBCO Spotfire page. The second argument is the key corresponding to column names, and the last argument is the values to be written into those columns; rows in the new table are chosen as unique values in the other columns. The mutate() function from the dplyr package is used to conveniently create additional columns in a dataset by transforming existing columns. The result of filter() contains the rows that satisfy all of the specified conditions. When specifying the conditions, we can use column names from the dataset directly, without quoting them or prefixing them with the dataset name.

The Filter Rows transform allows you to filter rows based on conditions and comparisons. In this tutorial you'll learn how to subset rows of a data frame based on a logical condition in the R programming language. FILTER can only be used to filter rows or columns at one time.

In order to filter both rows and columns, use the return value of one FILTER function as the range in another. FILTER returns a filtered version of the source range, containing only the rows or columns that meet the specified conditions. In this Spark article, you will learn how to apply a where filter on primitive data types, arrays, and structs using single and multiple conditions on a DataFrame, with Scala examples. This node takes a SQL query as input and produces a SQL query as output: the output query is the input query augmented with the row filter SQL statement.

In order to execute the SQL query and retrieve the data, you need to attach a DB Reader node to the output of the DB Row Filter node. If the first matching rule has a TRUE outcome, the row will be selected for inclusion. Otherwise (i.e., if the first matching rule yields FALSE), it will be excluded.
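The idea that a row filter can turn one SQL query into another, without fetching any data until a reader executes it, can be sketched with Python's sqlite3 module. The subquery-wrapping form used here, the `people` table, and the `add_row_filter` helper are assumptions for illustration; the exact SQL that KNIME's DB Row Filter generates may differ.

```python
import sqlite3


def add_row_filter(input_sql: str, condition: str) -> str:
    """Return a new query that applies `condition` on top of `input_sql`.

    Nothing is executed here: like a query-in/query-out node, this only
    builds SQL text. The caller decides when to run it.
    """
    return f"SELECT * FROM ({input_sql}) AS t WHERE {condition}"


# Hypothetical census-like table in an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE people (id INTEGER, birth_place TEXT)")
conn.executemany(
    "INSERT INTO people VALUES (?, ?)",
    [(1, "USA"), (2, "Canada"), (3, "USA"), (4, "Mexico")],
)

query = add_row_filter("SELECT id, birth_place FROM people", "birth_place = 'USA'")
print(query)
# Executing the query is the step that actually retrieves rows (the "reader" step).
print(conn.execute(query + " ORDER BY id").fetchall())  # [(1, 'USA'), (3, 'USA')]
```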

Note that on the very top of the node configuration window the column needs to be selected in the "Select column" menu. The Excluded list is populated with the unique values found in the selected column. Patterns to be matched can be moved from the Excluded list to the Included list through the Add/Remove arrows or through double-clicks. Besides "United States" and "United States of America", it is also very likely to find "USA" or "US" as birth place values, all indicating the same birth place.

In this case, even a wildcard based row filter might not be enough. Without resorting yet to regular expressions, we can use a few more complex rule based row filters.
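A rule-based filter along the lines described earlier in this post can be sketched in Python for the birth place example. In this sketch, rules are evaluated in order and the first matching rule decides inclusion. The rule list, the `first_match_keep` helper, and the choice to exclude values that match no rule are assumptions of this sketch, not KNIME's rule syntax.

```python
from typing import Callable, List, Tuple

# Each rule pairs a match test with an outcome: True keeps the row, False excludes it.
Rule = Tuple[Callable[[str], bool], bool]

RULES: List[Rule] = [
    (lambda v: v in {"United States", "United States of America"}, True),
    (lambda v: v in {"USA", "US"}, True),
    (lambda v: v == "", False),  # an empty birth place is explicitly excluded
]


def first_match_keep(value: str, rules: List[Rule]) -> bool:
    """Return the outcome of the first rule that matches; exclude if none match."""
    for matches, outcome in rules:
        if matches(value):
            return outcome
    return False


# Hypothetical census-like rows.
rows = [
    {"id": 1, "birth_place": "USA"},
    {"id": 2, "birth_place": "United States"},
    {"id": 3, "birth_place": "Canada"},
    {"id": 4, "birth_place": "US"},
    {"id": 5, "birth_place": "United States of America"},
    {"id": 6, "birth_place": ""},
]

kept = [r["id"] for r in rows if first_match_keep(r["birth_place"], RULES)]
print(kept)  # [1, 2, 4, 5]
```

Because evaluation stops at the first match, rule order matters: placing an exclusion rule earlier in the list lets it override later inclusion rules.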